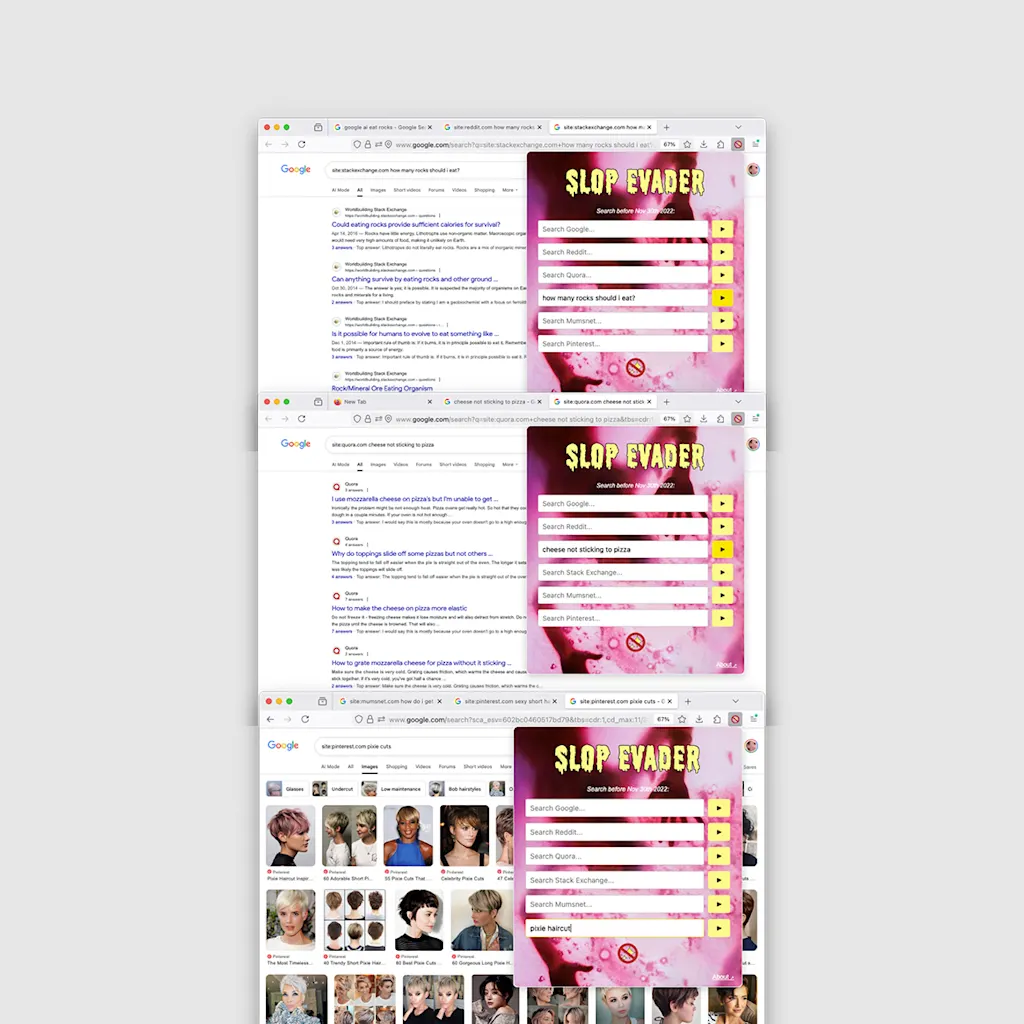

A new extension for Chrome stops AI slop from invading your life. Called Slop Evader, it is a temporal firewall that modifies your Google search queries to exclude any results indexed after November 30, 2022. That is the day the ChatGPT asteroid hit the open web, upending culture and reality as we know it.

Installing Slop Evader is easy: just add it to Chrome, toggle it on, and suddenly, the scroll of generative garbage vanishes. You are back in the “old” internet knowing that every article you read is not the product of simulated intelligence.
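The article does not say how Slop Evader rewrites a query, so the following is only a rough sketch of the general idea, not the extension's actual code. It assumes Google's `before:` search operator is the filter and that a date-filtered URL is all that is needed; the function name `add_date_cutoff` and the constant `CUTOFF` are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: the cutoff is the November 30, 2022 date named in the article.
CUTOFF = "2022-11-30"


def add_date_cutoff(search_url: str, cutoff: str = CUTOFF) -> str:
    """Return search_url with a `before:` operator appended to the `q` parameter.

    Hypothetical helper: it illustrates the "temporal firewall" idea and is
    not taken from the extension itself.
    """
    parts = urlsplit(search_url)
    rewritten = []
    for key, value in parse_qsl(parts.query, keep_blank_values=True):
        # Only touch the search terms, and skip queries that already carry a cutoff.
        if key == "q" and "before:" not in value:
            value = f"{value} before:{cutoff}"
        rewritten.append((key, value))
    return urlunsplit(parts._replace(query=urlencode(rewritten)))


if __name__ == "__main__":
    print(add_date_cutoff("https://www.google.com/search?q=sourdough+recipe&hl=en"))
```

One caveat with this approach: a date operator filters on the date the search engine associates with a page, which is not guaranteed to match when the page was actually written.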

It’s an enticing idea, especially since the latest estimate holds that more than 50% of all new articles on the internet are now generated by AI. But the digitally Amish lifestyle has an obvious flaw: You aren’t just evading slop; you are evading legitimate news, scientific breakthroughs, and culture itself. Which, on second thought, may be a good idea too.

The Chrome extension works on a premise that is 50% brilliant, 50% useless, and 100% depressing. Slop Evader is a powerful statement, but not exactly a solution. It’s a vacation from the permanent doubt that comes from clicking on anything these days.

Kill AI switch

AI slop shows no sign of stopping. Europol predicts that by 2026, 90% of online content could be synthetically generated. We are no longer surfing a web of human knowledge; we are drowning in a sea of hallucinations. We don’t need gimmicks. We need a way to access information without risk of being deceived by machines. We need a built-in toggle in every browser and platform to turn off the machine-generated trash. Since AI can no longer be detected by software, our only hope lies in proving what is real, not spotting what is fake.

There are already some efforts to do exactly that. For images, videos, and sound, the Coalition for Content Provenance and Authenticity (C2PA) has proposed a digital birth certificate for every pixel and sound wave you encounter. The technology already exists to cryptographically sign media at the point of capture, creating an unbroken chain of custody from the camera lens to your screen.

When I spoke with Ziad Asghar, SVP of product management at Qualcomm, about the end of reality, he told me that fake audio and video content is a big concern for everyone. “As these [AI] technologies become more prevalent, this is going to be a challenge,” he said. He was right two years ago, and he is even more right today. We need content that works like NFTs, using blockchain-like certificates to prove a video or an article wasn’t hallucinated by a GPU.

Qualcomm has successfully integrated C2PA support directly into its Snapdragon 8 Gen 3 mobile platform. The chip uses cryptography to sign the actual pixels of your photos the moment you snap them. Sony, Canon, and Leica have also rolled out firmware updates that sign images right at the point of capture. If you shoot with a Sony Alpha 9 III or a Canon EOS R1 today, you can generate a tamper-evident digital birth certificate for that file.
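To make "tamper-evident" concrete, here is a minimal sketch of the underlying idea: hash the captured bytes, sign a small claim about them, and check both later. It is not the C2PA format. Real C2PA manifests are embedded in the file and signed with certificate-based public-key signatures; this sketch swaps in an HMAC with a shared secret only because that is available in Python's standard library. The names `make_manifest` and `verify`, the device label, and the key are all hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, device: str, key: bytes) -> dict:
    """Build a signed claim about the media at the moment of capture.

    Stand-in only: HMAC replaces the public-key signature a real
    provenance system would use.
    """
    claim = {"device": device, "sha256": hashlib.sha256(media).hexdigest()}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(media: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the claim is untouched and still matches the media."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was altered
    return manifest["claim"]["sha256"] == hashlib.sha256(media).hexdigest()


if __name__ == "__main__":
    key = b"demo-device-key"           # hypothetical secret held by the camera
    photo = b"raw sensor bytes"        # placeholder for real image data
    manifest = make_manifest(photo, "example-camera", key)
    print(verify(photo, manifest, key))              # original file
    print(verify(photo + b"edit", manifest, key))    # file changed after capture
```

Changing a single byte of the file, or any field of the claim, makes verification fail, which is the property the "birth certificate" depends on.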

The problem is that most platforms shred this information when you upload. There are exceptions. TikTok supports C2PA and tells users what content was actually captured by a camera. Google has started integrating C2PA into its Search and Ads platforms, allowing the ‘About this image’ tool to verify provenance. And LinkedIn has the best option: The company says that it overlays an icon on C2PA-signed images that users can click to inspect the edit history.

So it can be done. If there were another certification standard for other types of content, like this article, and if every platform supported the standards in full, users would be able to push the Kill AI switch.

But, of course, you know where this ends.

Yeah, it will never happen

The same tech giants adopting these standards are simultaneously playing a cynical double game. While Meta and TikTok claim to be cracking down on AI slop by downranking third-party generated content, they are aggressively pushing their own AI tools. TikTok limits the reach of external AI videos while actively encouraging you to use its in-app AI filters. Meanwhile, Meta says it will “throttle down” AI content promotion, yet it gives users AI tools to create an eternal tsunami of slop.

They aren’t trying to save the internet from AI. They are trying to secure their own monopoly on AI pollution. They want to ensure that the only slop you consume is the premium, high-margin slop they generated for you. It is all about revenue domination. If you use Midjourney, you are a spammer. If you use Meta AI, you are a “creator.”

We can’t expect the companies profiting from AI creation to give us tools to deactivate the very content we create on their platform. So it seems, for now, our best (and imperfect) bet is something like Slop Evader—a time machine to transport you to a simpler time.

source https://www.fastcompany.com/91461264/slop-evader-ai-slop-browser-best-idea-of-2025
